Objective

Audience reviews can be nuanced: some movies are perceived as overhyped, while others may be underrated. This project investigates whether IMDb ratings align with the sentiment expressed in user reviews, using a BERT-based sentiment model and statistical analysis.

Approach & Methodology

1. Data Source and Preprocessing

Movie metadata and user reviews were scraped from IMDb using a custom Python pipeline built with Selenium, BeautifulSoup, and Requests.

- 150 movies (top movies 2020–2025)

- ~3,700 user reviews (25 relevant reviews per movie)

- Key fields: imdb_id, rating, genre, review text

Preprocessing: Text cleaning removed escape sequences and formatting artifacts to ensure compatibility with the sentiment model.
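The cleaning step could look roughly like the sketch below. The exact rules used in the project are not listed, so the function name `clean_review` and the specific patterns (HTML tags, HTML entities, literal `\n`-style escapes, repeated whitespace) are assumptions for illustration.

```python
import html
import re


def clean_review(text: str) -> str:
    """Normalize a scraped review into plain, single-spaced text."""
    # Drop leftover HTML tags such as <br/>.
    text = re.sub(r"<[^>]+>", " ", text)
    # Decode HTML entities such as &amp; or &#39;.
    text = html.unescape(text)
    # Replace literal backslash escapes (the two characters "\" and "n"),
    # which show up when text has been serialized twice.
    text = re.sub(r"\\[nrt]", " ", text)
    # Collapse real newlines, tabs, and repeated spaces into one space.
    text = re.sub(r"\s+", " ", text)
    return text.strip()
```

Tags are removed before entities are decoded so that a decoded `&lt;` is not mistaken for the start of a tag.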

2. BERT Sentiments

The BERT model used here is nlptown/bert-base-multilingual-uncased-sentiment. Running inference through the Hugging Face API took too long, so the model was run on Kaggle with a GPU instead. For each review, the model output is stored as a dictionary whose keys are the star ratings (1 to 5) and whose values are the corresponding probabilities.
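A minimal sketch of how the rating-to-probability dictionary might be produced, assuming the `transformers` text-classification pipeline is used and that the model's labels have the form "1 star" ... "5 stars". The helper names `to_star_probs` and `score_reviews` are hypothetical, and the project's actual inference code may differ.

```python
import re


def to_star_probs(label_scores):
    """Convert [{'label': '4 stars', 'score': 0.6}, ...] into {4: 0.6, ...}."""
    probs = {}
    for item in label_scores:
        # Labels look like "1 star" or "5 stars"; keep the leading integer.
        stars = int(re.match(r"\d+", item["label"]).group())
        probs[stars] = float(item["score"])
    return probs


def score_reviews(texts, device=0):
    """Run the sentiment model over a list of review strings.

    Assumption: this downloads the model weights on first use.
    """
    # Imported lazily so to_star_probs works without transformers installed.
    from transformers import pipeline

    clf = pipeline(
        "text-classification",
        model="nlptown/bert-base-multilingual-uncased-sentiment",
        device=device,  # 0 = first GPU; use -1 for CPU
    )
    # top_k=None requests scores for all five classes; truncation=True cuts
    # reviews that exceed the model's maximum input length.
    outputs = clf(list(texts), top_k=None, truncation=True)
    return [to_star_probs(out) for out in outputs]
```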

3. Aggregating Sentiments

For each review, the sentiment score was calculated as the probability-weighted mean of the star ratings and multiplied by 2 to put it on a 10-point scale. The BERT sentiment scores were then grouped by movie and averaged, and the result was joined with movies.csv to produce one row per movie.

A deviation column was then added, defined as round(actual rating - bert-rating, 2).

The resulting dataset, including the deviation column, is the final dataset used for analysis.
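The aggregation steps above can be sketched with pandas as follows. The toy rows and the column names `star_probs` and `bert_rating` are placeholders rather than the project's actual schema; only `imdb_id`, `rating`, and the deviation formula come from the text.

```python
import pandas as pd


def weighted_rating(star_probs):
    """Expected star value (1 to 5) times 2, giving a 10-point-scale score."""
    expected_stars = sum(stars * p for stars, p in star_probs.items())
    return 2 * expected_stars


# Hypothetical toy data standing in for the scored reviews and movies.csv.
reviews = pd.DataFrame({
    "imdb_id": ["tt001", "tt001", "tt002"],
    "star_probs": [
        {1: 0.0, 2: 0.0, 3: 0.0, 4: 0.5, 5: 0.5},  # 4.5 stars -> 9.0
        {1: 0.0, 2: 0.0, 3: 1.0, 4: 0.0, 5: 0.0},  # 3.0 stars -> 6.0
        {1: 0.5, 2: 0.5, 3: 0.0, 4: 0.0, 5: 0.0},  # 1.5 stars -> 3.0
    ],
})
movies = pd.DataFrame({"imdb_id": ["tt001", "tt002"], "rating": [8.1, 4.2]})

# Per-review score, then the mean score per movie.
reviews["bert_rating"] = reviews["star_probs"].apply(weighted_rating)
per_movie = reviews.groupby("imdb_id", as_index=False)["bert_rating"].mean()

# Join with the movie metadata and compute the deviation column.
final = movies.merge(per_movie, on="imdb_id", how="inner")
final["deviation"] = (final["rating"] - final["bert_rating"]).round(2)
```

Because the model predicts 1 to 5 stars, the rescaled score lies between 2 and 10 rather than between 0 and 10, which is worth keeping in mind when comparing it with IMDb ratings.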

Results & Insights

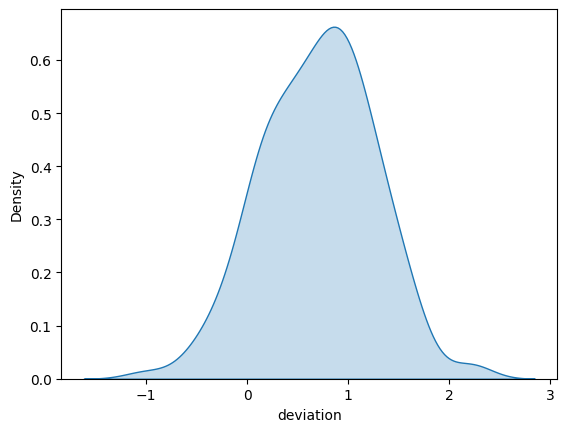

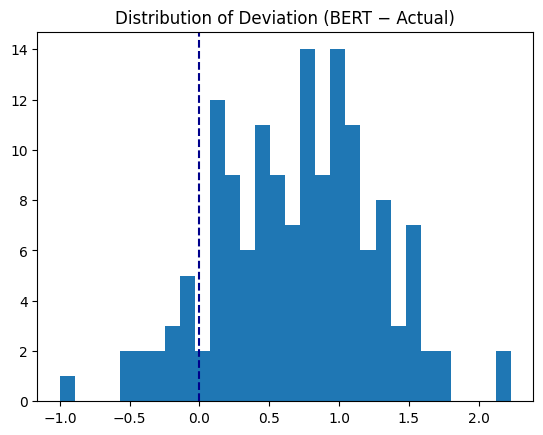

- The deviation between IMDb rating and BERT-derived sentiment approximately follows a normal distribution (mean = 0.7).

- The minimum deviation is -1 and the maximum is 2.3.

- Analysing deviation by genre:

  - The Drama-Sport genre showed the highest positive deviation (possibly driven by the large rating mismatch for the F1 movie).

  - The Adventure-Crime genre showed the most negative deviation.

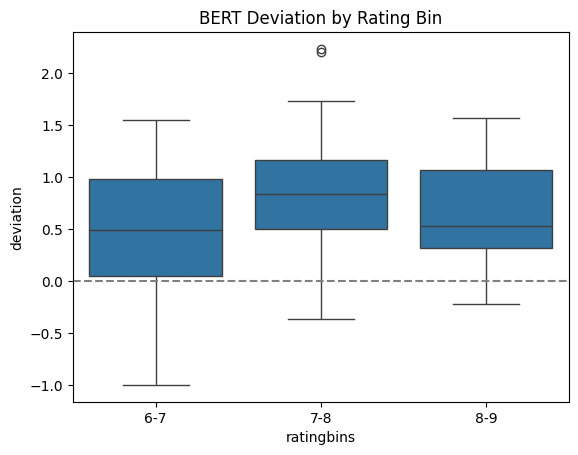

- Analysing deviation by rating:

  - Movies rated 7-8 showed more deviation on average, suggesting that opinions are most divided for average to above-average movies.

- Are BERT ratings different from IMDb ratings?

  - With the null hypothesis that the two ratings do not differ, the test gives a p-value of approximately 0. The null hypothesis is therefore rejected: there is a mismatch between rating and review sentiment.

- Analysis of variance (ANOVA):

  - By genre: p > 0.05, so deviation does not vary significantly across genres.

  - By rating bin: p < 0.05, indicating that deviation from the actual rating varies significantly across rating bins.

  - Tukey's test was used to find which rating bins differ significantly. The results showed that movies rated 6-7 and 7-8 varied significantly in their deviation from review sentiment.
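The tests in this list could be run along the lines of the sketch below. The write-up does not name the test used to compare the two ratings, so the paired t-test here is an assumption. The data is synthetic, all variable names are hypothetical, and `scipy.stats.tukey_hsd` requires a reasonably recent SciPy release.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)

# Synthetic movie-level data: IMDb rating and a BERT-derived rating that
# sits about 0.7 points lower on average (toy numbers, not project results).
imdb = rng.uniform(5.0, 9.0, size=150)
bert = imdb - rng.normal(0.7, 0.5, size=150)
deviation = imdb - bert

# 1) Paired t-test. H0: mean IMDb rating equals mean BERT rating.
t_res = stats.ttest_rel(imdb, bert)
print(f"paired t-test p-value: {t_res.pvalue:.3g}")

# 2) One-way ANOVA of deviation across rating bins 5-6, 6-7, 7-8, 8-9.
bin_idx = np.digitize(imdb, [6, 7, 8])  # bin index 0..3 for each movie
groups = [deviation[bin_idx == i] for i in range(4)]
f_res = stats.f_oneway(*groups)
print(f"ANOVA p-value: {f_res.pvalue:.3g}")

# 3) Tukey HSD: pairwise comparison of the bins. tukey.pvalue[i, j] is the
#    p-value for the difference in mean deviation between bins i and j.
tukey = stats.tukey_hsd(*groups)
print(tukey.pvalue.round(3))
```

Tukey's test is normally read only after the ANOVA rejects its null hypothesis, which matches the order used above. In this synthetic data the deviation is generated independently of the rating, so the ANOVA and Tukey outputs only illustrate the calls, not the project's findings.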